The Duplicate Content Debate

Today, I’m going to build some backlinks for my website. Here’s how I’m going to do it. I’m going to write a fantastic article. Then I’m going to take that article and submit it to my top 20 favorite article directories. What’s that? You don’t think it’s a good idea? Well, I do.

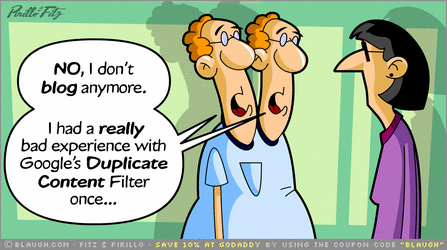

“B-b-b-but what about the duplicate content penalty?!” I can already hear you saying. “Won’t all that duplicate content cause Google and Matt Cutts to give your website an old-fashioned Google-style smackdown?”

Hear ye, hear ye. Matt Cutts hath spoken, and spoketh many times hath he on the gnarly subject of duplicate content: what it is, what it isn’t, and what happens to those who breaketh his divine words. (For the uninitiated, Matt Cutts is a senior software engineer at Google who answers questions about Google’s algorithm on his personal blog and is generally referred to as Lord Cutts by SEO practitioners. The Lord Cutts thing is a joke, by the way.) So what exactly HAS Matt Cutts said on the topic of duplicate content? On February 1, 2008, a post appeared on Matt Cutts’ blog that, for me, clears up the issue of duplicate content for all time. It’s worth a read if you have a minute.

For those who DON’T feel like reading it, however, I shall summarize. Any content that exists in more than one place on the internet is considered duplicate content. When the Googlebot crawls these pages, it identifies and tags them as duplicate content. So what happens to this so-called duplicate content?

The theoretical answer (according to Matt Cutts anyway) is de-indexing. That means the Google algorithm will determine which version of the content is the authority version and devalue or de-index the other versions. Is this really the case though? As an illustrative example, I suggest you go to Google and search for “Link Building: Is it Better to have an Ugly Duckling or a Beautiful Swan?”. How many indexed results do you find? This is an article written by a colleague of mine around two years ago. He published it to his blog and then syndicated it heavily, publishing it to numerous article directories and granting re-publishing rights to many independent webmasters. How has Google responded? It has undoubtedly de-indexed and devalued at least SOME of the duplicate copies. For every copy that is still up, however, there is a backlink with anchor text pointing to a target page. Backlinks have been established.
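For the curious, here is a rough sketch of how two copies of an article could be recognized as duplicates. To be clear, this is NOT Google’s method, which the post referenced above doesn’t spell out. It is a common textbook technique (word “shingles” compared with Jaccard similarity), and the function names and sample sentences are made up purely for illustration.

```python
import re


def shingles(text, k=3):
    """Return the set of overlapping k-word windows ("shingles") in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        # Very short text: treat the whole thing as a single shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Jaccard similarity of two sets: size of overlap / size of union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def similarity(text_a, text_b, k=3):
    """Score from 0.0 (no shared shingles) to 1.0 (identical shingle sets)."""
    return jaccard(shingles(text_a, k), shingles(text_b, k))


# Hypothetical sample texts, invented for this sketch.
original = "Link building works best when every article earns its place on the page."
syndicated = original  # an exact copy on an article directory
republished = original + " Republished with permission from the author."
unrelated = "Sushi rice needs to be rinsed several times before it is cooked."

print(similarity(original, syndicated))
print(similarity(original, republished))
print(similarity(original, unrelated))
```

The exact copy scores 1.0, the unrelated text scores 0.0, and the copy with an added attribution line lands somewhere in between. Where a real search engine draws the line between “duplicate” and “different” is anyone’s guess.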

My colleague’s example makes one point very clear. The only way the duplicate content ‘penalty’ could affect you is if you host the article on your own website as well. Notice that in the search I suggested above, Thefreelibrary.com is outranking my colleague’s personal website. Google has decided that Thefreelibrary.com has the most authority for his article, so it now outranks the original source. For this reason, a duplicate content “best practices” policy should ensure that the content you use onsite and the content you use offsite are different. We here at the office would never use the same content on a client’s website to build their backlinks. As far as the backlinks themselves go, however, we’re happy submitting to dozens of article hosting websites. The very worst that could happen is that a few of the copies become de-indexed.

To summarize:

Duplicate content can never be totally eradicated. It’s a normal part of online content creation. News sources are the best example of this. How many instances of a single Associated Press article do you think exist on the internet at any given time?

Because duplicate content is so natural, it can’t be ‘punished’. There is no ‘penalty’ for duplicate content. The worst that can happen is a de-indexing or devaluation of the content (which doesn’t even happen all the time).

It therefore follows: duplicate content is totally safe to use when constructing article backlinks, as long as you don’t use it on your own website (oh, and by the way, it’s really bad manners to submit duplicate content as guest-blog posts).

I hope this clears up some of the confusion about duplicate content. Any further questions can be directed to me in the comments, and I’d be happy to answer them.

Now, if you’ll excuse me, I have some backlinks to build. Where did I put that automatic article submitter…?

This is a guest post:

David Fishman, blogger and search engine marketer, lives and works in Atlanta, GA. He is an employee at Response Mine Interactive, a digital marketing agency offering online marketing solutions for new customer acquisition. His hobbies include link building, SEO, and operating his personal blog, where he writes about how to make sushi. Many thanks to ZK for this guest-post opportunity.

Image Credit: IM Idea

19 comments

Google’s algorithm is powerful enough to identify a blogger’s duplicate content and to work out who copied from whom, so it’s better not to do this.

Thanks for the summary. I know many bloggers that are scared to death about duplicate content. I agree with your strategy of submitting to multiple article directories, but I want to add that you have to be careful with EzineArticles as they only want original content. I guess you can submit there first and then, when the article gets accepted, submit to the other directories.

Ezinearticles does have a very strict policy about duplicate content. The right way to work with Ezinearticles is the way you’ve mentioned: “you can submit there first and then when the article gets accepted submit to the other directories”.

I prefer not to submit duplicate content to Ezinearticles or Buzzle. They both have rather stringent duplicate content policies and I would prefer my accounts not get banned (particularly Ezinearticles, where I’ve worked hard to build up a solid account and have a number of articles that rank highly for long-tails for some of my other websites).

If I want to submit a duplicate article to one of those websites, I just ‘spin’ it a little bit. I like to use http://www.spinprofit.com because it’s free but the paid services that come with something like Unique Article Writer, My Article Network, or any major SEO membership packages (SENuke, Web2Mayhem) will also work if you have them or you’re interested in trying them.

Really great guest post David! From my experience in today’s day and age, duplicate content is fine, I see too many websites copying other articles, and what not, and still getting good rankings + traffic. So I say, yup go for it and get those backlinks!

It definitely clears up the myths and misconceptions about duplicate content, David. I’m glad you raised these points.

At one time, we would submit the same article to 2-3 blogs. All three would stay in the search results, then after a short period, Google would select one of the three to remain in its index. It goes to show that the links those folks on DigitalPoint sell, by placing your blog entry on their 100-200 blogs to get a couple hundred backlinks, are probably not worth anything.

Colleen, my advice for just about anything for sale on Digital Point or Warrior Forum is: don’t.

Half the time, the people on there don’t have a clue about proper SEO practices, as evidenced by the inane questions and equally inane answers.

The blind leading the blind, if you ask me 🙂

(apologies to any DP or WF regulars)

I hear ya. I think the only thing DP is good for these days is finding writers. They come cheap enough to justify the editing required to use the writing!

I think Google should have a better ranking algorithm on duplicate content. My website was copied before and they rank higher than me just because I don’t really promote my site. It’s frustrating.

If you don’t really promote your site, why were you hoping to rank at all? 🙂

Fascinating how this topic never dies. Yours is another very clear explanation that ought to put it to bed until Lord Cutts says differently.

Now the one thing I did not know was about posting it on your own site, I have always made sure the content was on my site first, now I’ll have to change that.

Great post, it cleared a lot of confusion I had about duplicate content. Thanks for sharing it with us.

So, does it mean that it’s 100% ok to submit duplicate content on other sites?

Spinning your articles would help to get them indexed by Google.

Spinning your article is also a great way to do the same amount of work but protect yourself.

David, this is something that has been on my mind a lot lately as I’ve pondered the pros and cons of article directories and so forth. You have addressed all of my questions too I think.

In terms of article spinning, I have repurposed some of my old stuff and republished it on my own site but never really thought about submitting it to directories until reading this. Sounds like a good idea. BTW, does anyone know if there is a list anywhere of directories that don’t mind dupe content?

Thanks!

David, I’ve got a question for you re: dupe content. What about online newsrooms? Oftentimes online newsrooms host the exact same content, in the form of press releases, that press release distribution sites republish. In this case it seems plausible that Google wouldn’t de-index the site that hosted or originated the release or the press release site. What has been your experience with this?